Hand Talk announces groundbreaking technology for movement recognition in sign languages

On August 12th, we wrapped up the 4th edition of our annual event, Link 2021 – Digital Accessibility Festival, where we shared some big news with the public during a presentation by our CEO, Ronaldo Tenório, and our CAIO, Thadeu Luz.

We are already a reference in translation from written to sign languages, and now we are opening a new path to increase our global impact even further. The event’s biggest news was the creation of an assistive technology for sign recognition that uses Artificial Intelligence to detect movement.

Those who follow us know that our mission is to use technology to break communication barriers between deaf and hearing people through automatic sign language translation. Beyond that, we have been recognized by the UN as the best social app in the world!

Hand Talk Motion

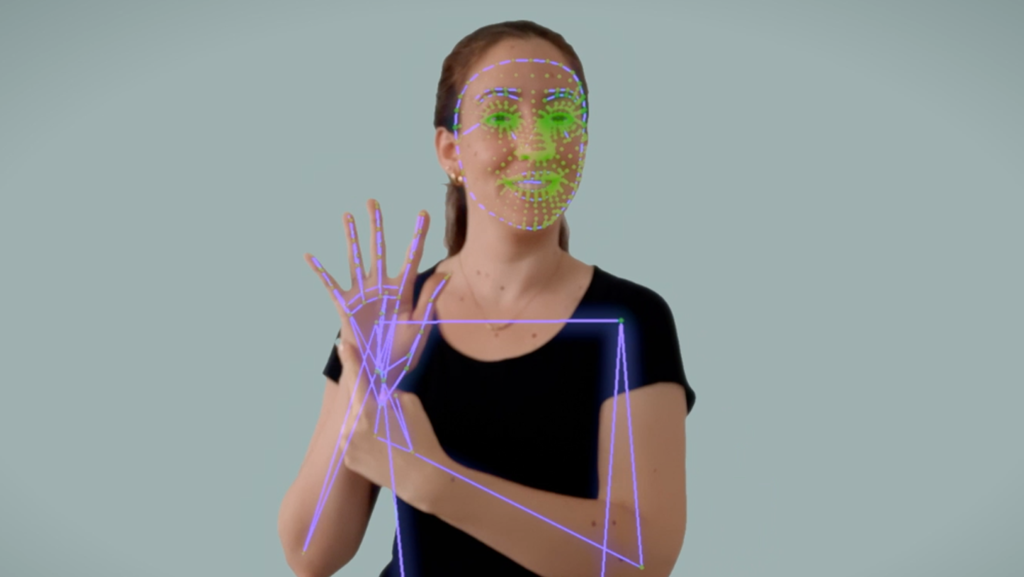

So far, we have offered solutions that worked in a single direction: from written to sign language. Whether in the app (text and audio) or in the website accessibility plugin (text and images), Hugo and Maya could only translate content into a sign language. That was essential to spread and facilitate communication between the deaf community and hearing people, but what if the reverse direction were also possible? After over 2 years of research, we are extremely happy to publicly present the Motion technology, which aims to perform the inverse translation for sign languages all over the world!

“Hand Talk Motion is a long-time dream come true: translation from sign languages to oral and written languages, thus completing the two-way communication cycle”, explains Thadeu. To see Motion in action, check out the explanatory video on our YouTube channel, which demonstrates the technology being used with ASL (American Sign Language) and Libras (Brazilian Sign Language).

An accessible innovation

In recent years, several simple experiments have been developed around the world with the goal of recognizing sign languages from static images or simple movements. The Motion technology goes further: it recognizes signs and longer sentences with such quality and precision that, in the future, it could even understand and translate different contexts and regionalisms.

With the advances that our Artificial Intelligence team presented during the event, it will be possible in the future to build translation models for every existing pair of oral and sign languages, which could be used all over the world!

Collaboration in data collection

Another big piece of news that we announced during the event was Hand Talk Community, a collaborative data collection platform that fuels our Artificial Intelligence machine. We are now transforming a tool that was once for internal use only and opening it to volunteers fluent in sign languages all over the world. This way, people can help us reach more countries, and Hugo and Maya can learn to communicate in any sign language. Awesome, right? With the opening of the platform to the public, we expect to break communication barriers between deaf and hearing people in less than half the time previously estimated. If you are fluent in a sign language and want to be a volunteer, access this page.

A long-time dream

“I usually say that deaf people very often live as foreigners in their own countries, because there is a huge communication barrier between deaf and hearing people. We have worked on research for a long time so that technology could allow us to build this disruption. So far, our solutions have made it possible for deaf people to access information that was exclusive to hearing people; now the technology empowers them to actively participate”, comments Ronaldo.

The launch of the Motion technology is very exciting, and the technology will soon be available in different products we offer. Sign-ups for the Community volunteer network are already open to people from around the world. Come and help us make the world more accessible!